A call center system no one fully understood

While at DMI Consulting, I was brought in by Optum (the healthcare services arm of UnitedHealth Group) to assist Triple-S Salud, a major Puerto Rico-based health insurer, with a complex operational challenge. Their call centers were fielding a high volume of calls from Medicare, Medicaid, and Private insurance recipients, as well as doctors, healthcare providers, and pharmacists. Each type of caller required a different set of processes, systems, and institutional knowledge to resolve.

The problem wasn't that call center workers were underperforming. It was that the systems, processes, and coding standards they relied on had been built, and rebuilt, across three distinct insurance lines, each with its own technology stack and workflow. The environment these workers operated in had never been designed holistically. It had accumulated over time, and the people doing the work had quietly learned to work around it.

"If you gather data from users and then incorporate your findings into your product design, you'll be more likely to meet their true needs. In return, they will probably like the end result better and become more efficient at using it."

Getting into the environment, not just the meeting room

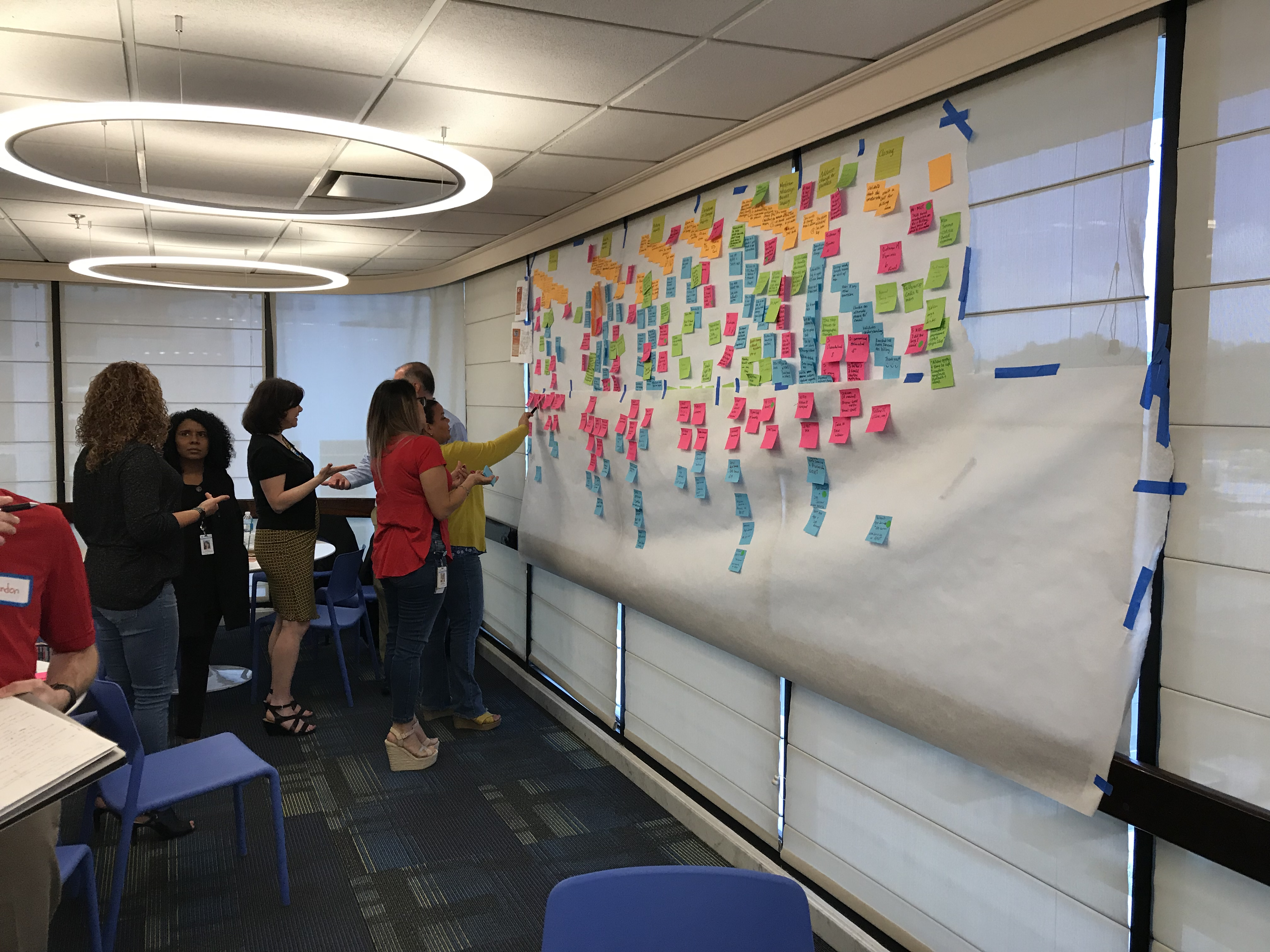

This project wasn't something that could be understood from a conference room. I conducted call center site visits to observe workers in their actual environment — watching how they navigated between systems, handled mid-call lookups, and managed the cognitive load of fielding complex insurance queries in real time. Observation revealed things that interviews alone never would: the small adaptations, the workarounds, the moments of hesitation that indicated where the system was failing the person using it.

Alongside the site visits, I ran in-person workshops with stakeholders and subject matter experts, working collaboratively with DMI's business process consultants to map the full scope of the problem. User interviews with call center representatives provided the qualitative depth to complement what observation had surfaced — giving workers the space to articulate pain points in their own language, not just the language of the process documentation.

Giving the research a human face

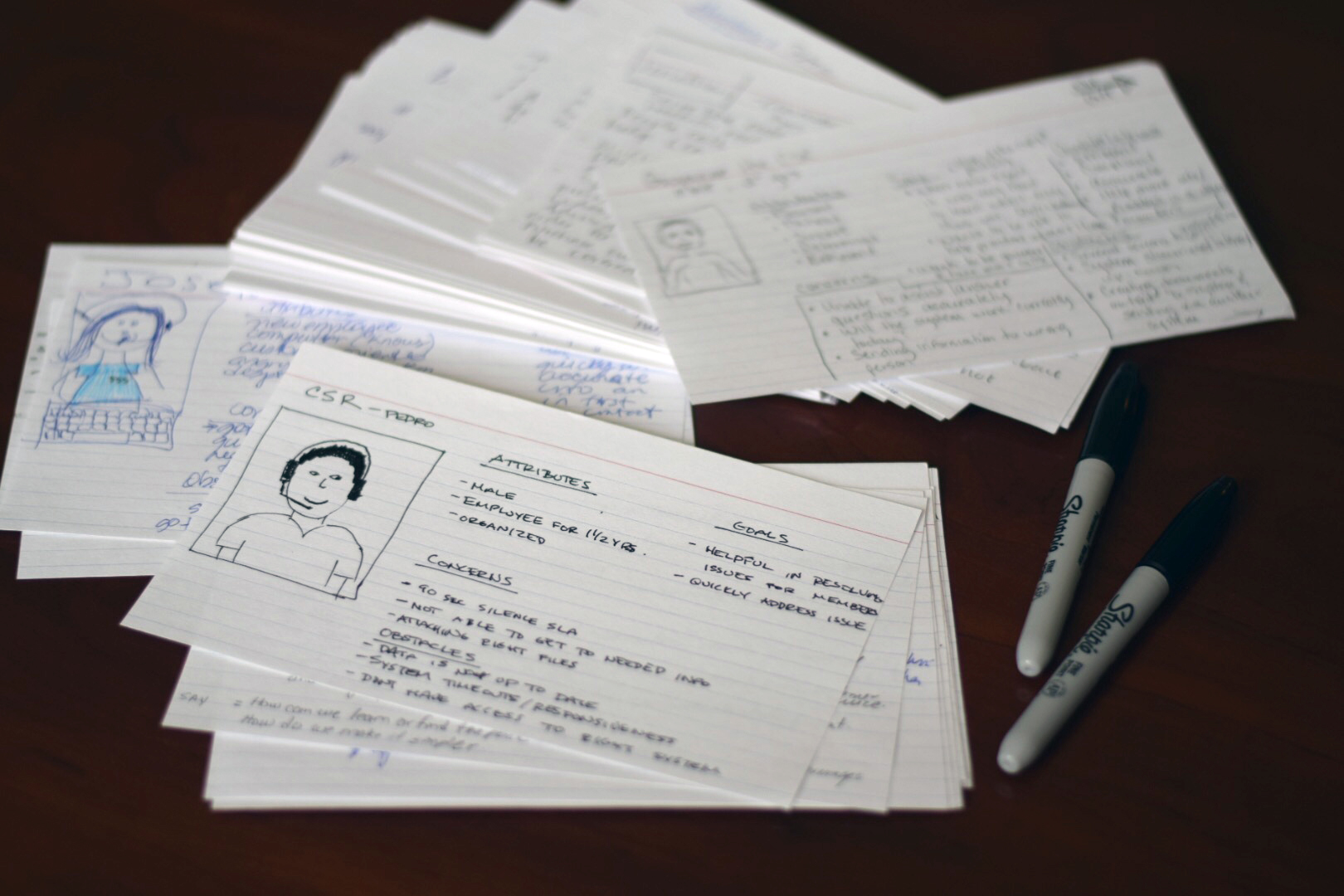

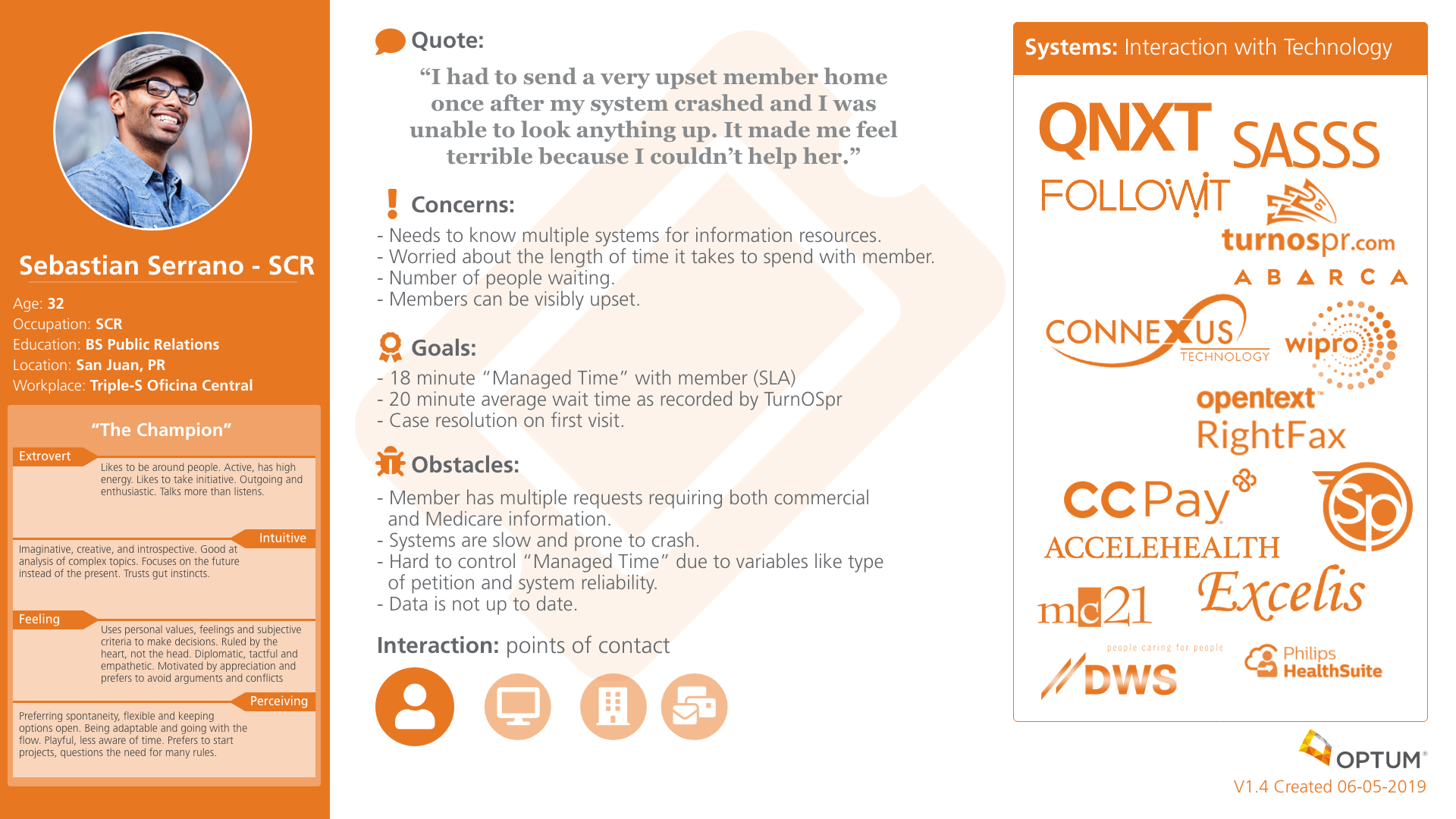

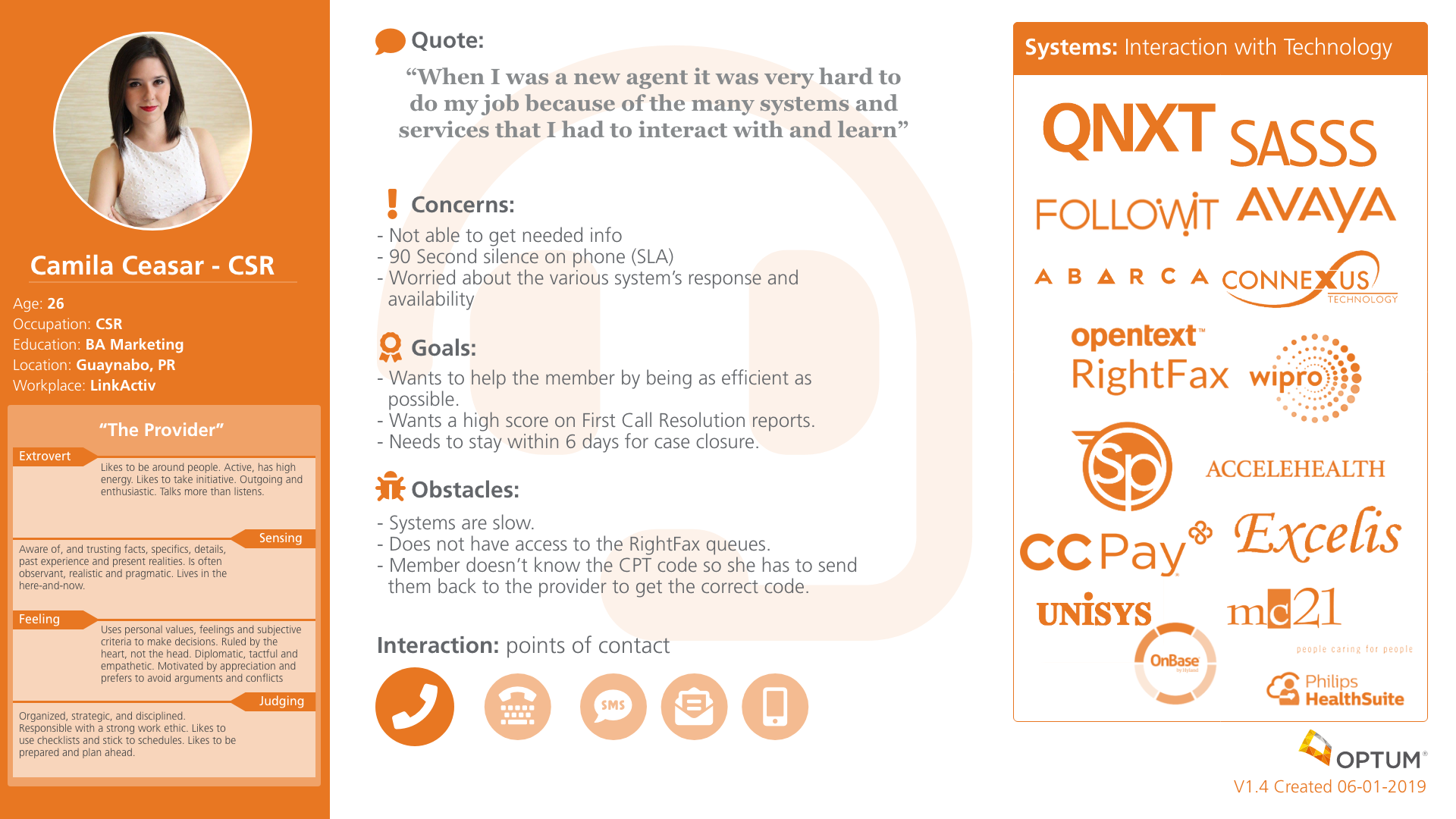

Personas are realistic descriptions of the people at the center of a design challenge — not abstract user types, but believable representations grounded in real data. For this project, two primary personas were developed to represent the distinct roles within the call center environment: the SCR (Service Center Representative) handling incoming calls, and the CSR (Customer Service Representative) navigating the operational side of case resolution.

These personas served a critical function beyond research documentation. They replaced "I think users want..." with "Our SCR persona needs..." — shifting stakeholder conversations from assumption-based opinion to user-centered evidence. Each persona was built from a combination of site visit observations, interview data, and workshop synthesis.

Mapping the experience from every angle

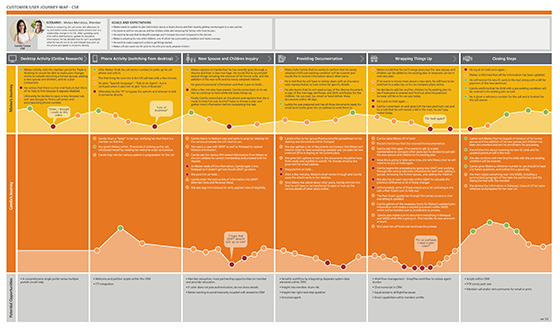

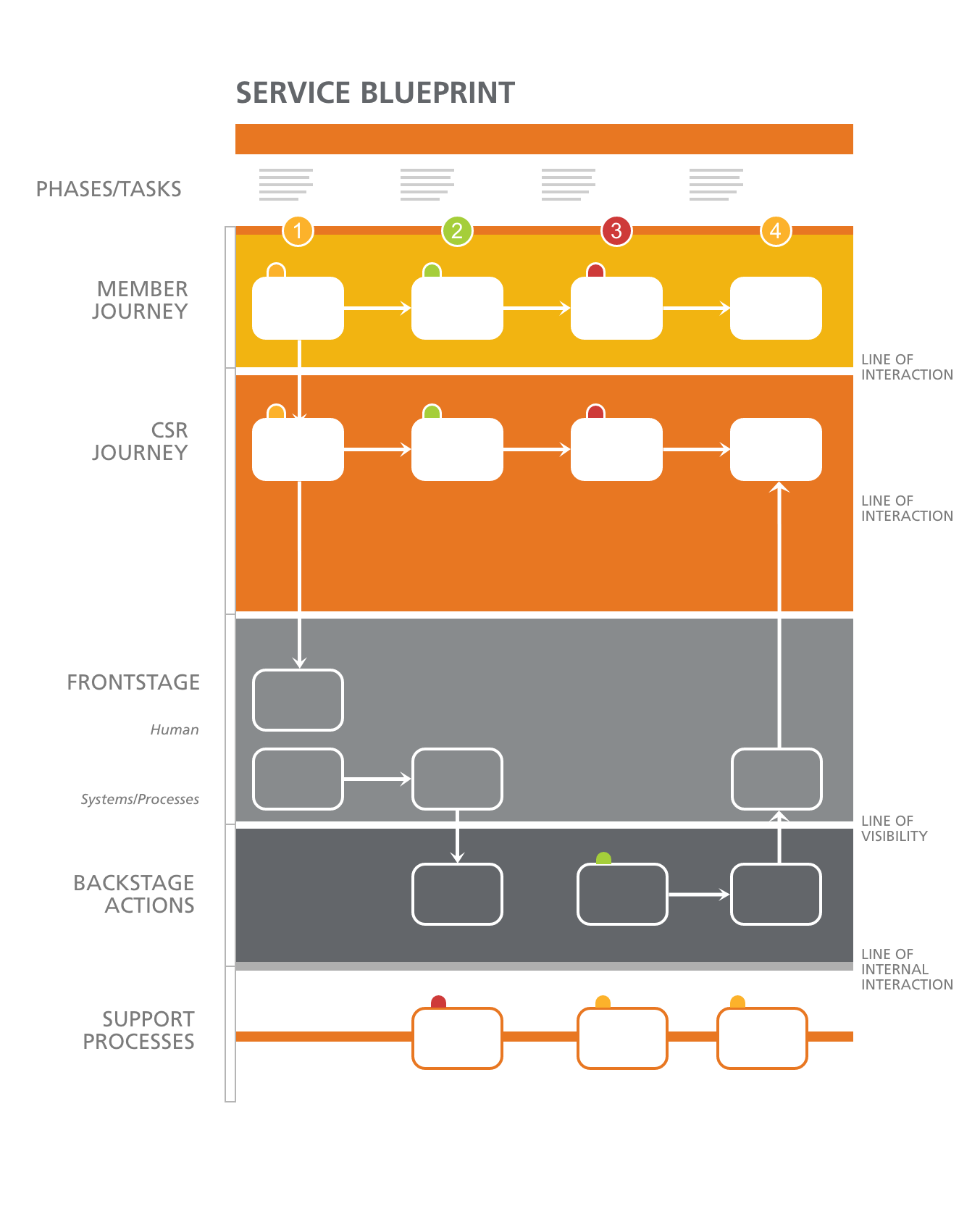

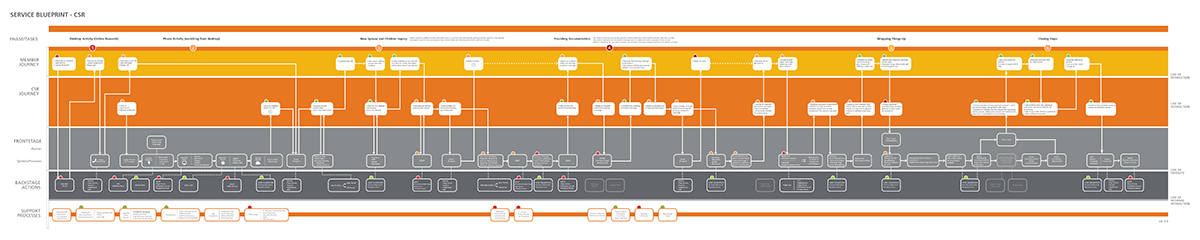

With personas established, the research needed to be translated into artifacts that could show — visually and systematically — where the experience was breaking down and why. Customer journey maps documented the series of interactions between call center workers and the systems, supervisors, and callers they engaged with — capturing emotional highs, lows, and the moments where friction compounded into failure. Service blueprints went deeper: mapping all the variables and relationships between service components directly tied to those touchpoints.

The service blueprints were where the most critical findings emerged. Documenting the full back-stage and front-stage processes for each insurance line made the inconsistencies impossible to ignore — and provided a precise record of where individual workers had built personal workarounds to bridge the gaps the system had never closed.

Three insurance lines. Zero consistent systems.

The research uncovered a systemic problem that went deeper than UI inconsistency. Medicare, Medicaid, and Private insurance operations had each been built independently — with different technology platforms, different UI patterns, different process flows, and critically, different coding systems. A call center worker handling all three lines wasn't moving between views of the same system. They were switching between entirely different operational environments, each requiring its own mental model.

The most telling finding wasn't in any system audit. It was in the site visits, where the workarounds workers relied on were in plain view. Sticky notes. Personal reference sheets. Unofficial shorthand. These weren't signs of individual failure; they were evidence of a system that had forced intelligent, experienced people to build unofficial infrastructure just to do their jobs. Each workaround was catalogued, mapped to its corresponding service blueprint touchpoint, and presented as a diagnostic indicator of where process improvement was most needed.

Research that moved the operational needle

The UX research deliverables (personas, journey maps, and annotated service blueprints) gave Optum and Triple-S Salud a precise, evidence-based picture of where their call center operations were losing time and why. The service blueprints in particular became the primary tool for cross-functional alignment: a shared visual document that business process consultants, operations leadership, and technology teams could all work from.

The recommendations focused on three areas: standardizing UI and process patterns across insurance lines, normalizing the coding systems that workers were forced to mentally translate, and formalizing the most effective worker-invented workarounds into official process documentation — turning fragile individual knowledge into institutional infrastructure.

What this project reinforced about service design in the field

The most important thing I did on this project was get out of the meeting room. Stakeholder workshops are essential for alignment, but they show you the organization's understanding of its own problems — which is not the same as the problems themselves. The site visits were where the real picture emerged. Watching a call center representative pause mid-call to consult a handwritten reference sheet they'd made themselves told me more about the system's failure modes than any process document could have. You can't design solutions to problems you've only heard described.

What I'd do again: treat every worker workaround as a signal, not a distraction. In most operational contexts, workarounds get dismissed as non-standard behavior. Here, they were the most precise diagnostic data I had. Each one mapped exactly to a gap in the official system, a place where the designed process had failed and human ingenuity had quietly patched over it. Cataloguing those workarounds and surfacing them as findings gave the recommendations a specificity and credibility that abstract process analysis couldn't have produced.